The Evolution of Spatial Computing in Spine Surgery: Tracing the Historical Arc to Present Day Implementation. World Neurosurgery.

https://doi.org/10.1016/j.wneu.2025.124514

Spatial computing

Spatial computing combines technologies that integrate digital information with the physical world, including augmented reality (AR), virtual reality (VR), artificial intelligence (AI), advanced imaging, and robotics. In surgery, these tools enable clinicians to visualize and manipulate anatomical data in three dimensions. They can be applied in both preoperative planning and intraoperative settings, providing better spatial understanding.

The beginnings of medical imaging

The first efforts in medical imaging date back to 1895, when Roentgen discovered X-rays. This discovery was soon applied in radiology to locate foreign objects in patients, such as needles and bullets. In 1908, another important advancement followed: Horsley and Clarke introduced a stereotactic device, originally tested on monkeys, that applied a Cartesian coordinate system to the brain using an external frame. This technique contributed to the development of neurosurgery and remains fundamental to this day, as it allows more accurate targeting of specific brain areas.

Later developments

In the 1970s, Godfrey Hounsfield introduced computed tomography (CT) scanning, which allowed tissue densities to be represented as standardized numerical values, known as Hounsfield units. By the late 1980s and early 1990s, frameless navigation systems freed surgeons from traditional stereotactic frames. Subsequent innovations in advanced spine navigation, such as the articulated arm, StealthStation, and NeuroStation, enabled frameless registration, real-time tracking, and integration of CT, MRI, and fluoroscopy. After receiving FDA clearance in 1996, the StealthStation system was widely adopted for cranial and spinal surgeries.
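The Hounsfield scale mentioned above is a linear rescaling of the measured attenuation coefficient, chosen so that water maps to 0 HU and air to -1000 HU. The following is a minimal sketch of that rescaling; the function name and the example coefficients are illustrative assumptions, and real attenuation values depend on the scanner's beam energy.

```python
def hounsfield_units(mu: float, mu_water: float, mu_air: float) -> float:
    """Rescale a linear attenuation coefficient to the Hounsfield scale."""
    return 1000.0 * (mu - mu_water) / (mu_water - mu_air)


# Illustrative coefficients in 1/cm (assumed values, not scanner calibration data).
MU_WATER = 0.19
MU_AIR = 0.0002

water_hu = hounsfield_units(MU_WATER, MU_WATER, MU_AIR)  # water is the zero point
air_hu = hounsfield_units(MU_AIR, MU_WATER, MU_AIR)      # air sits at -1000 HU
denser_hu = hounsfield_units(2 * MU_WATER, MU_WATER, MU_AIR)  # denser than water: positive HU

print(water_hu, air_hu, denser_hu)
```

Because the scale is anchored to water and air rather than to raw detector readings, values from different scanners can be compared directly.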

Modern imaging techniques

Intraoperative imaging plays an important role in spine surgery, giving surgeons a real-time view that helps guide their work. Of the many imaging methods available, CT and fluoroscopy are the most commonly used in neurosurgery and orthopedics. However, both patients and surgical teams are exposed to radiation, making dose optimization and protective measures essential to reduce long-term health risks.

Newer technologies, including solid-state CT and robot-assisted platforms, improve accuracy and support minimally invasive procedures. However, high costs and specialized training can limit their widespread use.

Modern imaging devices

Modern intraoperative imaging devices combine high-resolution 2D and 3D imaging with advanced navigation capabilities. They integrate multiple imaging modalities, such as cone beam or fan beam CT and fluoroscopy.

These systems can provide dynamic, real-time or near real-time visualizations, enabling surgeons to observe subtle anatomical shifts. Some devices also incorporate robotic assistance, which can reduce radiation exposure and improve procedural accuracy, particularly in complex spinal and orthopedic surgeries.

Robotic surgery

Robotic-assisted spine surgery uses robotic arms integrated with navigation systems to improve surgical precision and instrument guidance. These systems require an operator and are not autonomous devices. They allow surgeons to perform minimally invasive procedures with greater accuracy, such as precise screw placement, while reducing radiation exposure and enhancing stability during operations. Early systems like the da Vinci, which received FDA approval in 2000, paved the way for spinal applications. Modern models like MazorX, ExcelsiusGPS, and ROSA Spine combine preoperative planning with intraoperative guidance to optimize surgical outcomes.

Preoperative planning in spine surgery

Today, preoperative planning in spine surgery relies on advanced software powered by neural networks and machine learning. Platforms like Surgimap and UNiD use patient imaging to create detailed 3D models, allowing surgeons to evaluate spinopelvic parameters, plan osteotomies, and select appropriate implants.
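As an illustration of what evaluating spinopelvic parameters involves, the sketch below computes sacral slope, pelvic tilt, and pelvic incidence from three sagittal landmarks using their standard geometric definitions. It is not how Surgimap or UNiD work internally; the function name, the coordinate convention (x anterior, y up), and the landmark coordinates are assumptions made for the example.

```python
import math


def spinopelvic_parameters(s1_posterior, s1_anterior, femoral_head_center):
    """Return (sacral slope, pelvic tilt, pelvic incidence) in degrees.

    Points are (x, y) pairs in the sagittal plane, x pointing anterior, y pointing up.
    """
    px, py = s1_posterior
    ax, ay = s1_anterior
    fx, fy = femoral_head_center
    mx, my = (px + ax) / 2, (py + ay) / 2  # midpoint of the sacral endplate

    # Sacral slope: angle between the sacral endplate and the horizontal.
    sacral_slope = math.degrees(math.atan2(py - ay, ax - px))

    # Pelvic tilt: angle between the vertical and the line joining the
    # femoral head center to the endplate midpoint.
    pelvic_tilt = math.degrees(math.atan2(fx - mx, my - fy))

    # Pelvic incidence: angle between the endplate normal (pointing caudally)
    # and the line from the endplate midpoint to the femoral head center.
    nx, ny = ay - py, -(ax - px)
    vx, vy = fx - mx, fy - my
    cross = nx * vy - ny * vx
    dot = nx * vx + ny * vy
    pelvic_incidence = math.degrees(math.atan2(cross, dot))

    return sacral_slope, pelvic_tilt, pelvic_incidence


# Hypothetical landmark coordinates in millimetres.
ss, pt, pi_deg = spinopelvic_parameters((0.0, 0.0), (30.0, -25.0), (25.0, -60.0))
print(round(ss, 1), round(pt, 1), round(pi_deg, 1))
```

A useful sanity check on any such measurement is the geometric identity that pelvic incidence equals pelvic tilt plus sacral slope.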

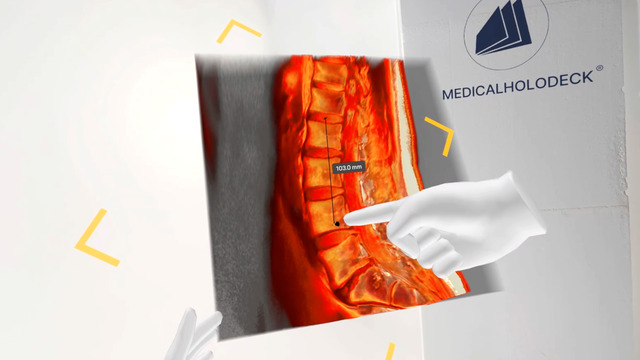

A newer platform for preoperative planning is VR. It provides surgeons with an immersive 3D environment to interact with patient-specific anatomy, which improves spatial understanding and surgical strategy. Within VR, surgeons can select and adjust tissue densities and virtually try out implants or osteotomies. This allows them to anticipate challenges before entering the operating room. These platforms also support collaborative planning, enabling multiple clinicians to review and discuss the surgical plan together, even from remote locations.
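Selecting tissue densities is commonly done by windowing: choosing a range of Hounsfield values to display and mapping it to visible intensity. The sketch below shows the general idea only and is not taken from any particular VR platform; the function name and the bone window preset are illustrative assumptions.

```python
def apply_window(hu: float, center: float, width: float) -> float:
    """Map a Hounsfield value to a display intensity in [0, 1] for a given window."""
    if width <= 0:
        raise ValueError("window width must be positive")
    lower = center - width / 2
    upper = center + width / 2
    if hu <= lower:
        return 0.0  # below the window: fully dark (or transparent)
    if hu >= upper:
        return 1.0  # above the window: fully bright (or opaque)
    return (hu - lower) / (upper - lower)


# Hypothetical bone-oriented preset: center 300 HU, width 1500 HU.
BONE_CENTER, BONE_WIDTH = 300.0, 1500.0

for hu in (-1000.0, 300.0, 2000.0):
    print(hu, apply_window(hu, BONE_CENTER, BONE_WIDTH))
```

Narrowing the window increases contrast within the chosen tissue range, while shifting the center moves the focus between soft tissue and bone.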

Intraoperative spatial imaging

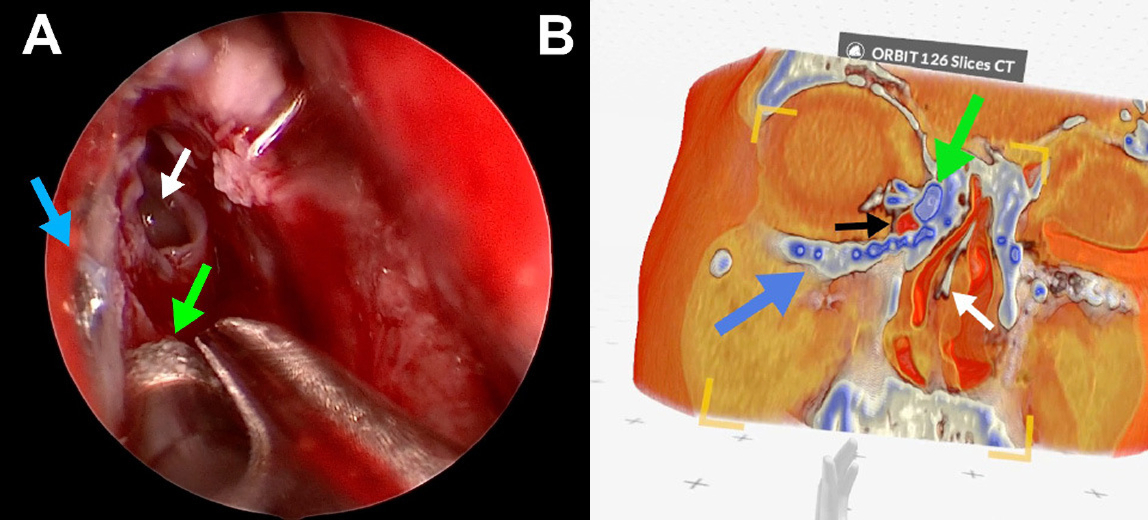

AR emerged with the widespread availability of smartphones and head-mounted devices, overlaying digital information onto the physical world. In spine surgery, AR is used to superimpose 3D images of a patient’s spine over their real anatomy, enabling surgeons to “see through” tissues and guide instrumentation.

Mixed reality (MR) extends AR by allowing interaction between virtual and physical elements. In the operating room, MR enables surgeons to manipulate 3D virtual content in real time, providing enhanced guidance for tasks such as pedicle screw placement or alignment verification. While MR is less widely implemented than AR, it has significant potential to improve precision, spatial understanding, and workflow efficiency in spinal procedures.

AI segmentation

AI segmentation in spine surgery uses artificial intelligence to automatically identify and differentiate between various tissues in medical images. Unlike traditional methods that required manual segmentation, this process can now be completed in under a minute, bringing out clinically relevant insights from raw 3D images.

Advanced tools can segment over 100 anatomical structures from CT scans, enabling fast and consistent identification of organs, vessels, and other critical tissues, which supports more precise planning and intraoperative guidance.
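A segmentation result is typically a label volume in which each voxel carries the ID of the structure it belongs to. The sketch below shows one way such output could be summarized for planning, by converting voxel counts into volumes per structure. The label IDs, structure names, and voxel size are hypothetical and do not reflect the output format of any specific tool.

```python
from collections import Counter


def structure_volumes_ml(label_volume, voxel_size_mm, label_names):
    """Return the volume in millilitres of each named structure in a label volume.

    label_volume is a nested list indexed as [slice][row][column].
    """
    dx, dy, dz = voxel_size_mm
    voxel_ml = dx * dy * dz / 1000.0  # 1 ml equals 1000 cubic millimetres
    counts = Counter(
        label for slice_ in label_volume for row in slice_ for label in row
    )
    return {
        name: counts.get(label_id, 0) * voxel_ml
        for label_id, name in label_names.items()
    }


# Hypothetical label map: 0 is background, 1 and 2 are two vertebrae.
LABEL_NAMES = {1: "L4 vertebra", 2: "L5 vertebra"}
toy_volume = [
    [[0, 1], [1, 1]],
    [[2, 2], [0, 0]],
]

print(structure_volumes_ml(toy_volume, (1.0, 1.0, 2.0), LABEL_NAMES))
```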

How to get started

Medicalholodeck integrates with secure hospital systems, providing PACS access, HIPAA-compliant data handling, and full patient data security. It runs on stereoscopic 3D displays, VR headsets, mobile devices, and standard Windows systems, enabling flexible use in hospitals, classrooms, and training centers.

Specialized features for surgical planning are exclusive to Medical Imaging XR PRO. Currently, Medicalholodeck is available only for educational use. The platform is undergoing FDA and CE certification, and we expect Medical Imaging XR PRO to be available soon in the U.S. and EU markets.

For more information, contact info@medicalholodeck.com.

February 2026