Integrating AI Segmentation, Simulated Digital Twins, and Extended Reality into Medical Education: A Narrative Technical Review and Proof-of-Concept Case Study. Journal of Personalized Medicine, 16(4), 202.

https://doi.org/10.3390/jpm16040202

From imaging data to digital twins

Medical imaging has long been limited to cross-sectional, two-dimensional views. CT and MRI provide highly detailed data, but interpretation requires experience, time, and mental spatial reconstruction by the surgeon. Recent advances in AI are fundamentally changing this process.

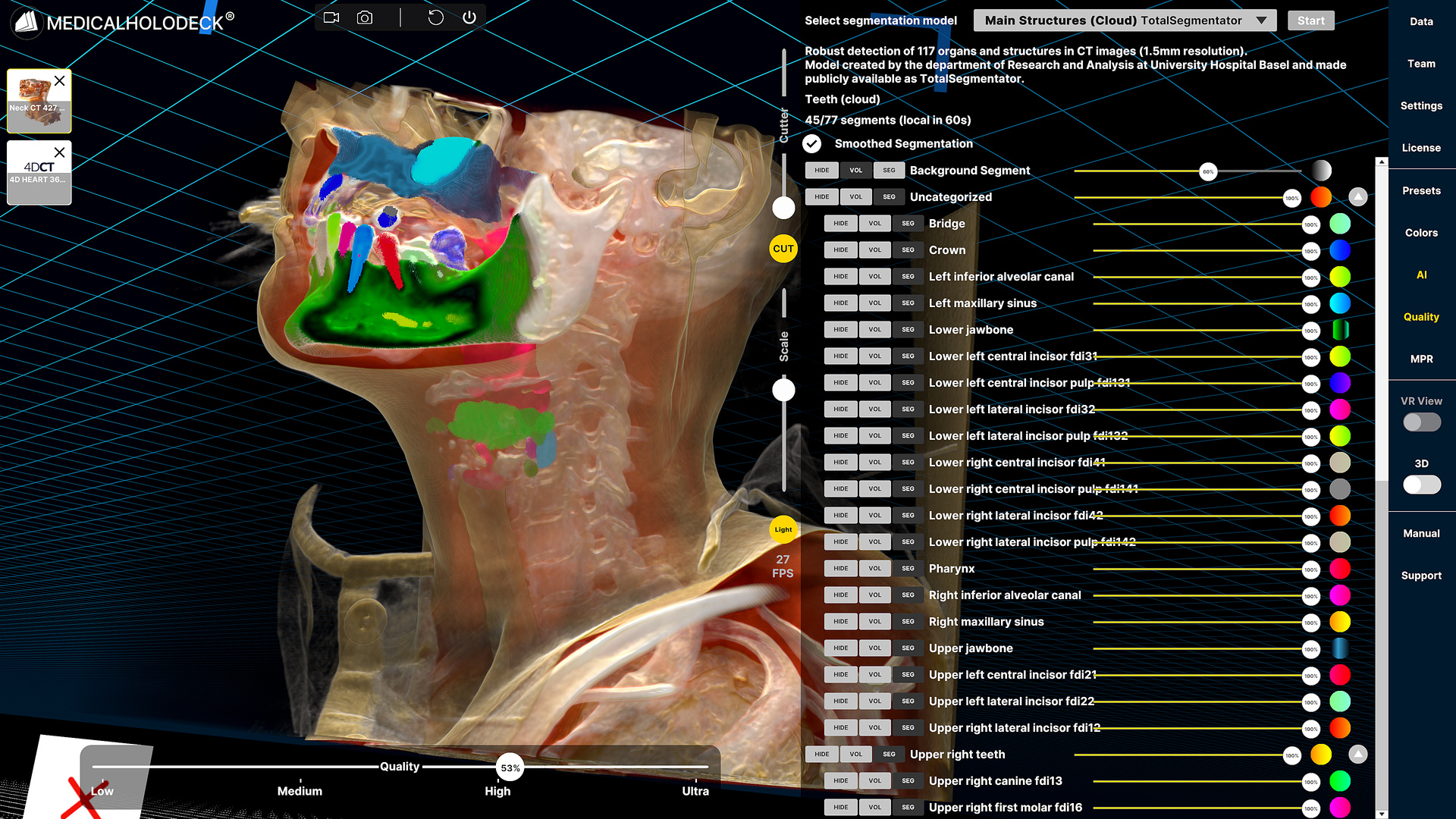

AI segmentation significantly reduces the time required to prepare imaging data. Models that previously required extensive manual processing can now be generated automatically. Anatomical structures such as organs, vessels, and regions of interest are identified and separated directly from CT and MRI datasets within seconds, creating structured and usable data without manual effort.

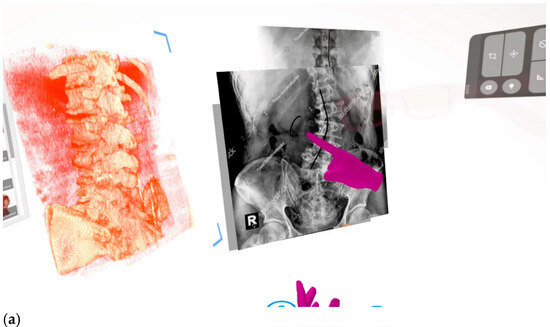

A sample from the recorded lesson: the learner stands in the instructor's direct field of view, looking at the 3D-rendered scoliosis model shown side by side with X-ray images of the scoliotic anatomy. The magenta hands represent the recorded instructor drawing on the digital assets as the lesson plays.

These segmented datasets form the foundation for digital twins, patient-specific 3D models that represent real anatomy. Instead of interpreting individual slices, medical professionals and students can interact with complete anatomical structures in space, improving spatial understanding and reducing cognitive load.

As AI segmentation automates model creation, the generation of digital twins becomes scalable, enabling broader adoption across educational programs and institutions.
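To make the step from a segmented dataset to a 3D model more concrete, here is a minimal sketch in plain Python. It assumes the segmentation output is a labeled voxel grid (a nested list indexed as `mask[z][y][x]`) and builds a blocky surface by collecting every voxel face that borders a voxel outside the structure. The label value, voxel spacing, and all function names are illustrative assumptions, not taken from the paper or from any Medicalholodeck product. Production pipelines typically use a smoother method such as marching cubes.

```python
from typing import List, Set, Tuple

Voxel = Tuple[int, int, int]  # (z, y, x) index into the label mask

# Offsets to the six face-connected neighbors of a voxel.
NEIGHBORS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def voxels_with_label(mask: List[List[List[int]]], label: int) -> Set[Voxel]:
    """Collect the (z, y, x) index of every voxel carrying `label`."""
    return {
        (z, y, x)
        for z, plane in enumerate(mask)
        for y, row in enumerate(plane)
        for x, value in enumerate(row)
        if value == label
    }


def exposed_faces(voxels: Set[Voxel]) -> List[Tuple[Voxel, Voxel]]:
    """Return (voxel, outward_direction) for each face bordering a non-member voxel."""
    faces = []
    for (z, y, x) in voxels:
        for (dz, dy, dx) in NEIGHBORS:
            if (z + dz, y + dy, x + dx) not in voxels:
                faces.append(((z, y, x), (dz, dy, dx)))
    return faces


def volume_mm3(voxels: Set[Voxel], spacing_mm: Tuple[float, float, float]) -> float:
    """Structure volume: voxel count times the volume of one voxel."""
    sz, sy, sx = spacing_mm
    return len(voxels) * sz * sy * sx


# Hypothetical 4x4x4 mask with a 2x2x2 block labeled 1 (standing in for one structure).
mask = [[[0] * 4 for _ in range(4)] for _ in range(4)]
for z in (1, 2):
    for y in (1, 2):
        for x in (1, 2):
            mask[z][y][x] = 1

structure = voxels_with_label(mask, 1)
faces = exposed_faces(structure)
print(len(structure), "voxels,", len(faces), "surface faces")
print("volume:", volume_mm3(structure, (1.0, 0.5, 0.5)), "mm^3")
```

Each exposed face can be turned into two triangles to obtain a renderable mesh. Because the whole step is a pure function of the label mask, it can run unattended for every new dataset, which is what makes model generation scale.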

Improving understanding and communication

Digital twins fundamentally change how medical data is used and shared. Complex anatomical relationships become directly visible, and pathologies can be isolated and explored within their full spatial context.

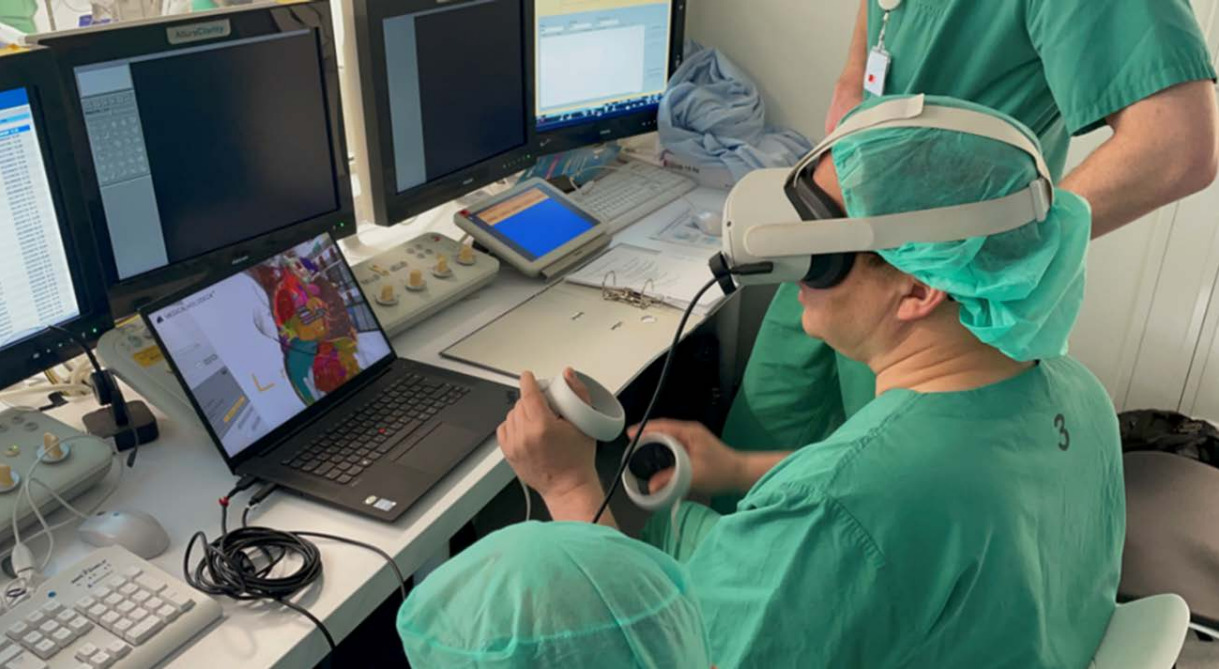

Instead of describing findings verbally or relying on 2D representations, clinicians and students can review the same dataset together in a shared immersive environment. Multiple users can access and interact with the model in real time, each from their own perspective, enabling more effective collaboration and alignment across disciplines.

Creating four-dimensional spatial content

In this case, the instructor used Medicalholodeck’s RecordXR spatial capture toolset to record a guided educational session within a VR environment. The session incorporated spinal landmark identification, neuraxial access techniques, and 3D annotation of pathological anatomy in a patient with severe scoliosis.

Unlike traditional 2D video instruction, this XR-based module preserves spatial reasoning and expert guidance directly within the anatomical context. The instructor's explanations, gestures, and interactions remain linked to the 3D data, creating a more intuitive and engaging learning experience.

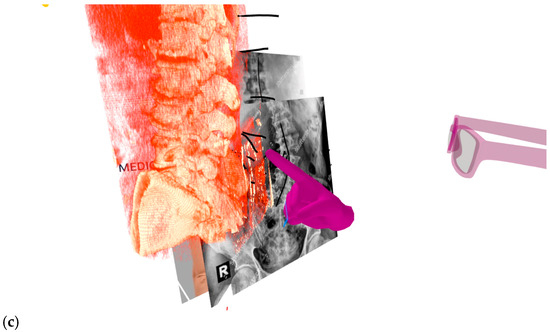

Learners can revisit the session at any time, replay specific steps, or interact with the model themselves to reinforce understanding. Recording content in VR enables educators to build structured libraries of cases, simulations, and teaching materials that are accessible, repeatable, and scalable.

This approach preserves not only visual information, but also expert reasoning, making complex procedural knowledge reproducible and widely accessible.
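One way to picture how recorded gestures can stay linked to the 3D data and be replayed from any point is a timestamped track of positions stored in the model's coordinate frame. The sketch below is purely illustrative: `PoseSample`, `SessionTrack`, and the single hand position per sample are assumptions made for this example and do not describe RecordXR's actual recording format.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class PoseSample:
    t: float    # seconds since the start of the recording
    hand: Vec3  # hand position in model coordinates (hypothetical field)


class SessionTrack:
    """Time-ordered samples that can be queried at any playback time."""

    def __init__(self, samples: List[PoseSample]):
        if not samples:
            raise ValueError("a track needs at least one sample")
        self.samples = sorted(samples, key=lambda s: s.t)
        self._times = [s.t for s in self.samples]

    def pose_at(self, t: float) -> Vec3:
        """Linearly interpolate the hand position at time t, clamping at both ends."""
        if t <= self._times[0]:
            return self.samples[0].hand
        if t >= self._times[-1]:
            return self.samples[-1].hand
        i = bisect_right(self._times, t)  # first sample strictly after t
        a, b = self.samples[i - 1], self.samples[i]
        w = (t - a.t) / (b.t - a.t)
        return tuple(pa + w * (pb - pa) for pa, pb in zip(a.hand, b.hand))


track = SessionTrack([
    PoseSample(0.0, (0.0, 0.0, 0.0)),
    PoseSample(2.0, (2.0, 4.0, 6.0)),
])
print(track.pose_at(1.0))  # halfway between the two samples
```

Because positions are expressed relative to the model rather than to the instructor's viewpoint, a learner can seek to any time and view the same gesture from a different angle.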

The learner can view the RecordXR (RXR) lesson from angles other than the instructor's point of view at the time of recording. The magenta glasses and hands represent the instructor's embodiment as visualized for the learner. Here the instructor is marking the obliquity of the spinous processes on the scoliosis model to provide insight into the pathologic anatomy.

From education to clinical application

Beyond education, the same workflows can be directly translated into clinical practice. The combination of AI segmentation, digital twins, recording, and XR enables preoperative planning, interdisciplinary collaboration, and patient-specific simulation within a unified environment.

This continuity between learning and clinical application reduces complexity and supports more informed decision-making, bridging the gap between training and real-world care.

The shift to spatial medical education

By converting medical images into structured 3D data and evolving them into dynamic digital twins, AI enables a new form of learning, one that is immersive, personalized, and directly connected to clinical practice.

Combined with XR, this approach transforms education into a spatial, interactive experience, preparing the next generation of clinicians for an increasingly data-driven and simulation-based future.